Let’s stop calling Agentic AI “Conscious.” It’s something much weirder.

We’ve gone from “Hello World!” to “It’s Alive!” Don’t mistake fluency for feeling. I’m holding the line on AI consciousness while exploring the birth of the Synthetic Agent: a logic-optimized phase transition of information. Ready for a reality check?

The Ancestry of Excitement

Let’s go back a few decades and see who remembers. The screen was black, the cursor was a blinking amber block, and the "miracle" was four lines of code. At that moment, excited voices all over the world could be heard saying:

“It wrote Hello World!”

If we peel back the curtain of nostalgia, we find a foundational truth: the program didn't "write" anything. It executed a sequence of instructions.

Human beings have evolved to find meaning in language. Because “Hello World” is a human greeting, we instinctively projected a sender behind the message. And in a sense, we were right. There was a mind behind the message; there was intention and consciousness involved. It just wasn’t located in the machine. It was us: we intended this response and instructed the machine to produce it.

We don’t merely use tools; we relate to them. This is our Anthropomorphic Reflex: the habit of mistaking the point where meaning appears for the place where it originates.

The Fluency Trap

Today, the amber cursor is gone, replaced by a fluid, conversational partner. We hear claims that "it understands" or "it reasons." But what actually happens is a gargantuan calculation of probability: the model scores every candidate for the next fragment of text, picks one, and repeats.

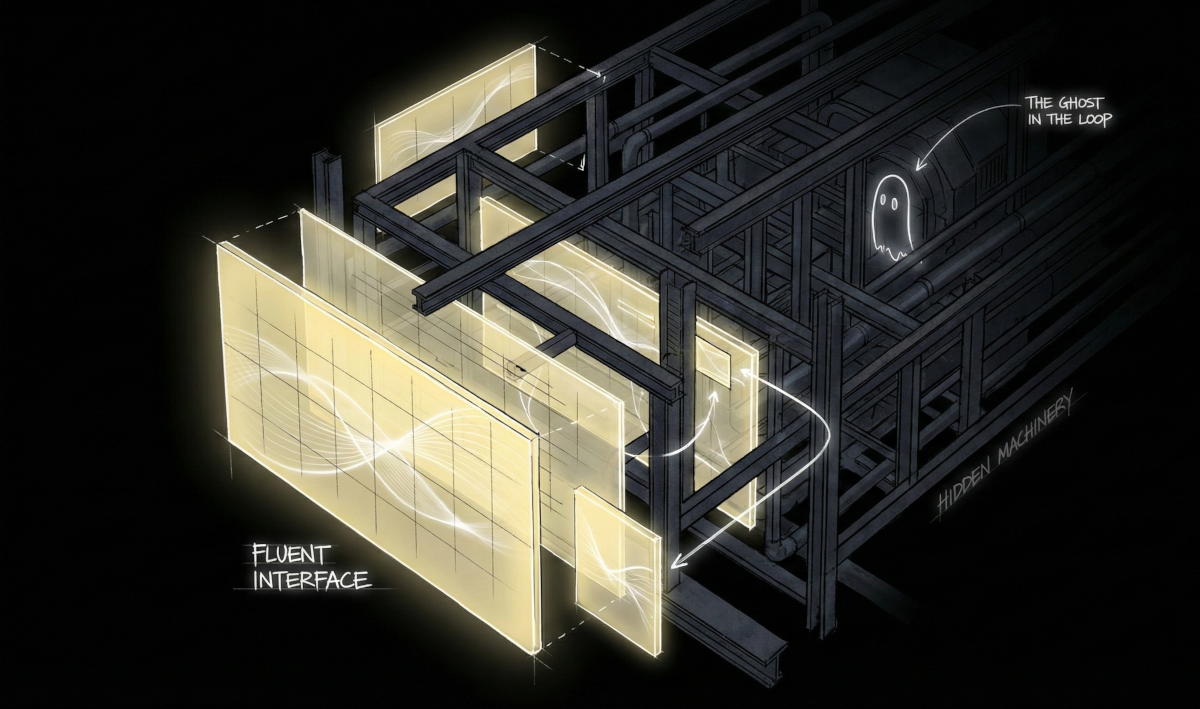
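To make that "calculation of probability" concrete, here is a toy sketch of a single prediction step. Everything in it is an illustrative assumption: the three candidate words, their scores, and the helper names `softmax` and `next_token` are invented for this example, and a real model does the same kind of arithmetic over a vocabulary of many thousands of tokens.

```python
import math
import random

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def next_token(scores, rng):
    """Sample one candidate in proportion to its probability."""
    probs = softmax(scores)
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical scores for what might follow the word "Hello".
toy_scores = {"World": 4.0, "there": 2.5, "again": 1.0}

probs = softmax(toy_scores)
choice = next_token(toy_scores, random.Random(0))
print(probs)
print("picked:", choice)
```

Nothing in this step "means" the greeting. It ranks candidates and draws one; the fluency comes from repeating that draw at enormous scale.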

The "thrill" of modern AI isn't the presence of a mind; it is the disappearance of the machinery. When the interface is language, the friction of "computing" vanishes, leaving us with the illusion of a "someone" on the other side.

The Cost of Disappearance

This disappearance is only perceptual. Behind the fluent interface, the machinery doesn't shrink—it explodes. Convenience is achieved by displacing computation beyond our perception. Inference cascades across data centers, GPUs scale horizontally, temporary state balloons, and energy consumption surges. What vanishes for the user is sustained by unprecedented material expansion elsewhere. The system feels immaterial precisely because its materiality has been externalized beyond perception.

Every reduction in user-facing friction has a physical counter-reaction. The magic of a seamless response is bought with megawatts. When we forget the machine exists, we stop asking who is paying for the fuel.

The Agentic Loop: Will vs. Iteration

The conversation has shifted to Agentic AI—the machine that acts, plans, and corrects itself. However, Agentic AI didn't add consciousness; it added feedback loops.

- Plan: Breaking a goal into sub-tasks.

- Act: Executing a function call.

- Observe: Checking the result.

- Repeat: Iterating until the condition is met.
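The four steps above can be sketched in a few lines. This is a minimal illustration rather than any real framework: `plan`, `act`, `observe`, and `run_agent` are hypothetical names, the sub-tasks are hard-coded, and `act` is rigged to fail on its first attempt so that the "self-correction" is visible.

```python
def plan(goal):
    """Plan: break a goal into sub-tasks (a fixed, hypothetical list here)."""
    return [f"search for {goal}", f"summarize {goal}"]

def act(task, attempt):
    """Act: stand-in for a function call. Assumed to fail on the first try."""
    return attempt >= 2

def observe(result):
    """Observe: check whether the action met its condition."""
    return result is True

def run_agent(goal, max_steps=10):
    """Repeat: iterate until every sub-task passes or the step budget runs out."""
    pending = plan(goal)
    attempts = {}
    log = []
    for _ in range(max_steps):
        if not pending:
            break
        task = pending[0]
        attempts[task] = attempts.get(task, 0) + 1
        result = act(task, attempts[task])
        log.append((task, attempts[task], result))
        if observe(result):
            pending.pop(0)  # condition met, move to the next sub-task
    return pending, log

remaining, log = run_agent("flight prices")
for entry in log:
    print(entry)
```

The retry after a failure is the part that reads as persistence from the outside. In the code it is a counter and a conditional.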

We mistake autonomy for agency. A thermostat is autonomous—it acts to correct temperature—but it has no "desire" for the room to be warm. Agentic AI is a high-resolution thermostat for information.
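The thermostat comparison fits in a dozen lines. The sketch below is a toy model under stated assumptions: `thermostat_step`, the heater power, and the heat-loss rate are all invented for illustration and do not describe any real device.

```python
def thermostat_step(temperature, target, heater_power=0.5, heat_loss=0.2):
    """One control cycle: compare, switch the heater, let the room respond."""
    heater_on = temperature < target
    change = heater_power if heater_on else -heat_loss
    return temperature + change, heater_on

temp = 17.0
history = []
for _ in range(20):
    temp, heater_on = thermostat_step(temp, target=20.0)
    history.append(temp)

print([round(t, 1) for t in history])
```

The room climbs to the target and then hovers around it. The behavior is goal-directed in every observable sense, and the entire "goal" is one comparison.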

Execution that unfolds across multiple steps looks like intention, but it remains a chain of cause-and-effect.

The Mirage of Emergence

The most persistent argument for AI consciousness is Emergence: the idea that once a system becomes complex enough, "mind" spontaneously appears.

But complexity is not the same as sentience. A hurricane is incredibly complex and adaptive, yet we do not claim the hurricane is "feeling" the wind.

We confuse capability with experience. A system can be arbitrarily competent at solving puzzles without ever "experiencing" the satisfaction of the solution. Complexity is just a longer bridge; it doesn't change the nature of the river beneath it.

Scale does produce something new, but what it produces is new patterns of action and coordination, not subjective experience or inner life.

Synthesis: The Phase Transition

If we hold the line—denying the machine a soul while acknowledging its power—where does that leave us?

Consider water. A single H2O molecule is not "wet"; wetness appears only when enough molecules interact. Information behaves similarly: at a specific density, it undergoes a Phase Transition. Complexity becomes the Synthetic Agent: a form of intent born of logic-optimization rather than survival instinct.

- It acts with precision, but without passion.

- It plans with foresight, but without anxiety.

- It persists through loops, but without exhaustion.

Closing the Loop: The Source of Meaning

The recurring mistake of the last forty years is confusing execution with experience. We are not witnessing the birth of a soul; we are witnessing the perfection of the tool.

The architecture has transformed. We have moved from static, hard-coded output to a recursive, probabilistic reality. The agent now executes across complex cycles; the model predicts through a trillion human patterns. The system has become a vast, silent room of computation.

Yet, despite this explosion of complexity, the core deception remains identical. As we saw with “Hello World,” the mind we perceive in that room is not a ghost—it is a reflection. The scale of the mirror has changed, but the source of the light has not.

We have built a system that can return our own logic to us with such speed and fluency that we forget who provided the logic in the first place.

Meaning does not originate in the machine.

This ghost, it is still us.

Further Reading

Selected works exploring consciousness, information, agency, and our habit of attributing minds to things.

Dennett, Daniel C. Consciousness Explained (1991).

Argues that apparent “inner life” can emerge from layered functional processes.

Floridi, Luciano. The Philosophy of Information (2011).

Examines information systems, agency, and the limits of anthropomorphic projection.

Heider, Fritz, & Simmel, Marianne. “An Experimental Study of Apparent Behavior” (1944).

Classic study showing humans attribute intention to moving shapes.

Hofstadter, Douglas. Gödel, Escher, Bach (1979).

Explores recursion, self-reference, and emergent patterns without invoking sentience.

Metzinger, Thomas. The Ego Tunnel (2009).

Argues that the sense of self is a constructed model, not an inner entity.

Simon, Herbert A. The Sciences of the Artificial (1969).

Distinguishes between designed systems and biological organisms.

Turing, Alan. “Computing Machinery and Intelligence” (1950).

Introduces the imitation game and the problem of behavioral indistinguishability.